Introduction

Most engineering teams can tell you their velocity to the decimal. Almost none can tell you how long it takes a new feature to reach production without requiring a patch within 72 hours. The number they track with precision measures activity. The number they ignore measures whether that activity is building anything durable.

The Comfort of Countable Work

Velocity and story points became standard because they solved a real problem: engineering work is invisible to the people funding it. A product manager or CEO cannot look at a codebase and assess whether the team is moving. Velocity gave leadership a number to watch, and numbers feel like accountability.

The logic is straightforward. Estimate effort, complete work, sum the points, track the trend. If the number goes up or holds steady, the team is productive. If it drops, something is wrong. It is simple enough to fit on a dashboard and legible enough for a board slide.
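The arithmetic behind the dashboard fits in a few lines of Go. This is a minimal sketch, assuming each sprint simply records the story points of the tickets closed in it. The `Ticket` type, `Velocity`, `Trend`, and the ticket IDs are hypothetical names used for illustration. Note that nothing in the calculation asks what happened to the work after it closed.

```go
package main

import "fmt"

// Ticket is a hypothetical unit of estimated work closed during a sprint.
type Ticket struct {
	ID     string
	Points int
}

// Velocity sums the story points of every ticket closed in a sprint.
// It counts completions only; it has no notion of durability.
func Velocity(closed []Ticket) int {
	total := 0
	for _, t := range closed {
		total += t.Points
	}
	return total
}

// Trend returns the sprint-over-sprint change in velocity, which is
// what a dashboard typically charts. It returns nil for fewer than two sprints.
func Trend(velocities []int) []int {
	if len(velocities) < 2 {
		return nil
	}
	deltas := make([]int, 0, len(velocities)-1)
	for i := 1; i < len(velocities); i++ {
		deltas = append(deltas, velocities[i]-velocities[i-1])
	}
	return deltas
}

func main() {
	// Two hypothetical sprints of closed tickets.
	sprints := [][]Ticket{
		{{"PAY-1", 8}, {"PAY-2", 5}, {"PAY-3", 13}},
		{{"PAY-4", 8}, {"PAY-5", 13}, {"PAY-6", 8}},
	}
	var v []int
	for _, s := range sprints {
		v = append(v, Velocity(s))
	}
	fmt.Println("velocity:", v, "trend:", Trend(v))
}
```

Running it prints `velocity: [26 29] trend: [3]`, an upward trend, whether or not any of those tickets needed rework a day later.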

This framing became dominant not because it accurately describes engineering output, but because it made engineering legible to non-engineers. That is a different accomplishment — and a dangerous one to confuse with measurement.

Velocity Measures Decision Volume, Not Decision Quality

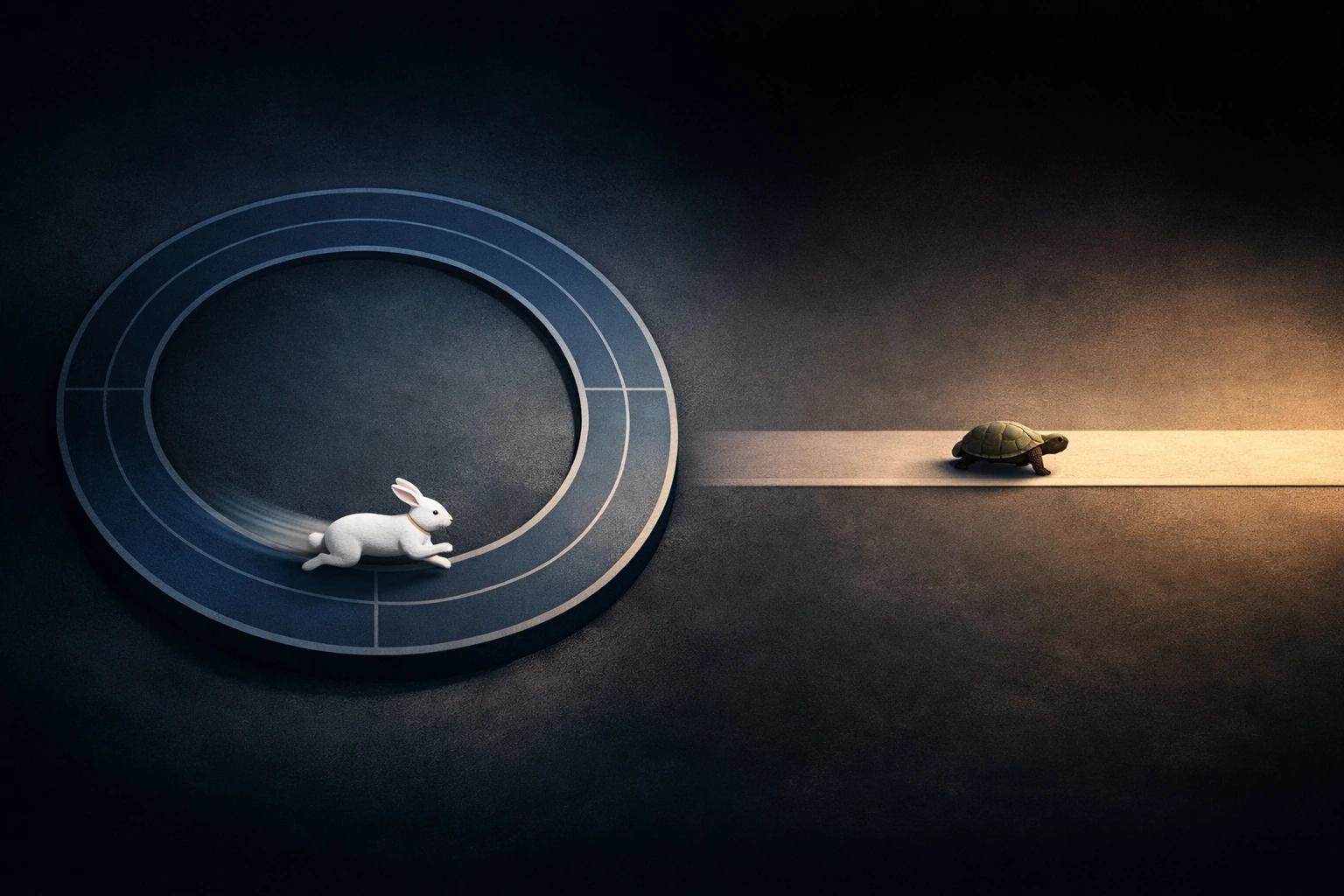

The contradiction is specific: velocity tracks the rate at which an engineering team closes units of estimated work. It does not distinguish between a decision that resolves a problem permanently and one that defers the problem by six weeks. Both register the same on the dashboard. One builds compounding capability. The other builds compounding cost.

When you incentivize decision volume, you get teams that optimize for ticket completion. Not because engineers are cynical — because the system tells them, every sprint, that finishing is what matters. The pull request that ships a feature with a hardcoded configuration value closes the ticket just as cleanly as the one that builds a proper abstraction. The first takes two hours. The second takes two days. Under velocity pressure, the two-hour version wins almost every time — not by mandate, but by gravity.

What Fragility Actually Looks Like From Inside

Consider a backend team running a set of Go services handling payment orchestration for a mid-market SaaS product. Their velocity was strong — consistently delivering 40+ story points per sprint across a five-person team. Leadership saw the chart and saw health.

Inside the codebase, something different was accumulating. Each payment integration — Stripe for US customers, Adyen for EU, a regional processor for Latin America — had been implemented as its own flow, with its own retry logic, its own error taxonomy, and its own webhook handler. Every integration ticket was scoped, estimated, and delivered on time. Velocity reflected the completions accurately.

The cost surfaced when the product team requested a single feature: unified refund status visible to customer support across all processors. What should have been a query and a UI component became a three-sprint project because there was no shared abstraction for payment state. Each processor stored outcomes differently, named failure modes differently, and emitted events on different cadences. The team had built three payment systems wearing the same namespace.
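To make "no shared abstraction for payment state" concrete, here is a minimal Go sketch of the kind of normalization layer the team lacked. Everything in it is hypothetical. `RefundStatus`, `RefundSource`, and `mapAdapter` are illustrative names. The native status codes are invented placeholders, not the real vocabularies of Stripe, Adyen, or any regional processor.

```go
package main

import "fmt"

// RefundStatus is a hypothetical normalized refund state shared by all processors.
type RefundStatus int

const (
	RefundUnknown RefundStatus = iota
	RefundPending
	RefundSucceeded
	RefundFailed
)

// String makes the normalized state readable in logs and support views.
func (s RefundStatus) String() string {
	switch s {
	case RefundPending:
		return "pending"
	case RefundSucceeded:
		return "succeeded"
	case RefundFailed:
		return "failed"
	default:
		return "unknown"
	}
}

// RefundSource is the shared abstraction: each processor adapter translates
// its own native status vocabulary into RefundStatus.
type RefundSource interface {
	Name() string
	Normalize(native string) RefundStatus
}

// mapAdapter is a hypothetical table-driven adapter. The native codes
// passed to it below are illustrative, not any real processor's API values.
type mapAdapter struct {
	name  string
	table map[string]RefundStatus
}

func (a mapAdapter) Name() string { return a.name }

// Normalize maps a native code to RefundStatus, falling back to RefundUnknown.
func (a mapAdapter) Normalize(native string) RefundStatus {
	if s, ok := a.table[native]; ok {
		return s
	}
	return RefundUnknown
}

func main() {
	sources := []RefundSource{
		mapAdapter{"us", map[string]RefundStatus{"ok": RefundSucceeded, "in_progress": RefundPending, "error": RefundFailed}},
		mapAdapter{"eu", map[string]RefundStatus{"SETTLED": RefundSucceeded, "RECEIVED": RefundPending, "REJECTED": RefundFailed}},
	}
	// A support view can query one status type regardless of processor.
	fmt.Println(sources[0].Normalize("ok"), sources[1].Normalize("REJECTED"), sources[1].Normalize("???"))
}
```

This prints `succeeded failed unknown`. With an interface like this in place, a unified refund view becomes a loop over sources rather than three bespoke queries. The design choice is to push each processor's quirks to the edge, inside its adapter.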

The engineers knew this. They had flagged it in retros, proposed a normalization layer, estimated it at roughly four sprints of work. It kept getting deprioritized — not maliciously, but because the backlog was scored by velocity impact, and foundational work produces low point totals relative to its duration. The system that measured productivity was structurally biased against the work that would have made the team genuinely faster.

By the time the refund feature shipped — late and patched twice — the team's velocity had actually increased for the quarter. The dashboard showed a team performing well. The codebase showed a system where every new cross-processor feature would cost three to five times what it should.

The Metric Teaches the Organization What to Value

One pattern that only becomes visible across multiple teams: velocity does not just measure behavior — it shapes what the organization considers legitimate work. When story points determine sprint success, and sprint success determines team reputation, engineers learn which work counts and which work is invisible.

Refactoring, dependency upgrades, observability improvements, test infrastructure: these earn few or no story points, so any system where points measure progress deprioritizes them by construction. Over time, the organization develops a blind spot precisely where structural health lives.

The deeper problem is that this blind spot is self-reinforcing. As foundational work gets skipped, feature work gets harder. As feature work gets harder, estimates inflate. As estimates inflate, velocity holds steady or even rises — because points are relative to effort, not outcome. The metric can show a perfectly flat line while the system underneath is losing load-bearing capacity every sprint.
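A toy calculation shows how a flat line can hide shrinking output. The numbers are assumed for illustration only. A team completes 40 points per sprint, echoing the example above, while the estimate for a similarly scoped feature climbs from 5 to 8 to 10 points. `SprintSnapshot` and `FeaturesDelivered` are hypothetical names.

```go
package main

import "fmt"

// SprintSnapshot is a hypothetical view of one sprint: the points the team
// completed and the current estimate for a feature of comparable scope.
type SprintSnapshot struct {
	PointsCompleted int
	FeatureEstimate int // points now assigned to a similarly scoped feature
}

// FeaturesDelivered converts completed points back into whole comparable features.
func FeaturesDelivered(s SprintSnapshot) int {
	if s.FeatureEstimate <= 0 {
		return 0
	}
	return s.PointsCompleted / s.FeatureEstimate
}

func main() {
	// Assumed numbers: velocity stays at 40 while estimates for the same
	// kind of feature inflate as the codebase degrades.
	history := []SprintSnapshot{{40, 5}, {40, 8}, {40, 10}}
	for i, s := range history {
		fmt.Printf("sprint %d: velocity=%d features=%d\n", i+1, s.PointsCompleted, FeaturesDelivered(s))
	}
}
```

Velocity reads 40 in every sprint, while comparable features delivered fall from 8 to 5 to 4.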

The Economic Shape of the Damage

The cost is not evenly distributed across time. Systems optimized for velocity tend to feel fine for 12 to 18 months. Tickets close, features ship, the roadmap advances. The inflection arrives when the product requires something that crosses the boundaries the team drew in haste — a new integration pattern, a data migration, a performance requirement that touches three services simultaneously.

At that point, the accumulated fragility converts into calendar time. Not gradually — in steps. A feature that should take two weeks takes six. The next one takes eight. Engineering starts requesting "hardening sprints" or "tech debt quarters," which are organizational admissions that the measurement system failed to detect degradation until it became acute.

For a Series A or B company, this inflection often coincides with the moment they need to move fastest — entering a new market, responding to a competitor, onboarding a design partner with an urgent timeline. The fragility tax comes due exactly when the organization can least afford it. And because velocity never dropped, leadership has no model for why everything suddenly feels slow.

The most expensive engineering metric is the one that stays green while the system underneath is losing the capacity to change.

Key Takeaways

- Velocity measures the rate of ticket completion, not the rate of durable progress — and the distinction compounds over every sprint.

- Story point systems are structurally biased against foundational work because refactoring, observability, and dependency management produce low point totals relative to their duration and impact.

- Engineering fragility accumulates silently under stable velocity numbers because story points measure estimated effort, not outcomes or system health.

- The cost of metric-driven fragility is non-linear — systems feel productive for 12 to 18 months, then degrade in steps when the product requires work that crosses boundaries drawn under velocity pressure.

- Organizations that use velocity as their primary indicator of engineering health develop a self-reinforcing blind spot: the metric rises as the work it ignores makes every future feature more expensive.