Introduction

The production incident that triggers a war room gets a postmortem, a remediation plan, and a Jira epic. The system that takes nine days to onboard a new API consumer instead of two gets nothing — no alert, no postmortem, no executive attention. One is a crisis. The other is the actual threat. Most software systems are never brought down by a single catastrophic event. They decay, one reasonable decision at a time, until the distance between what the system is and what anyone thinks it is becomes unbridgeable.

Why Catastrophic Failure Gets All the Attention

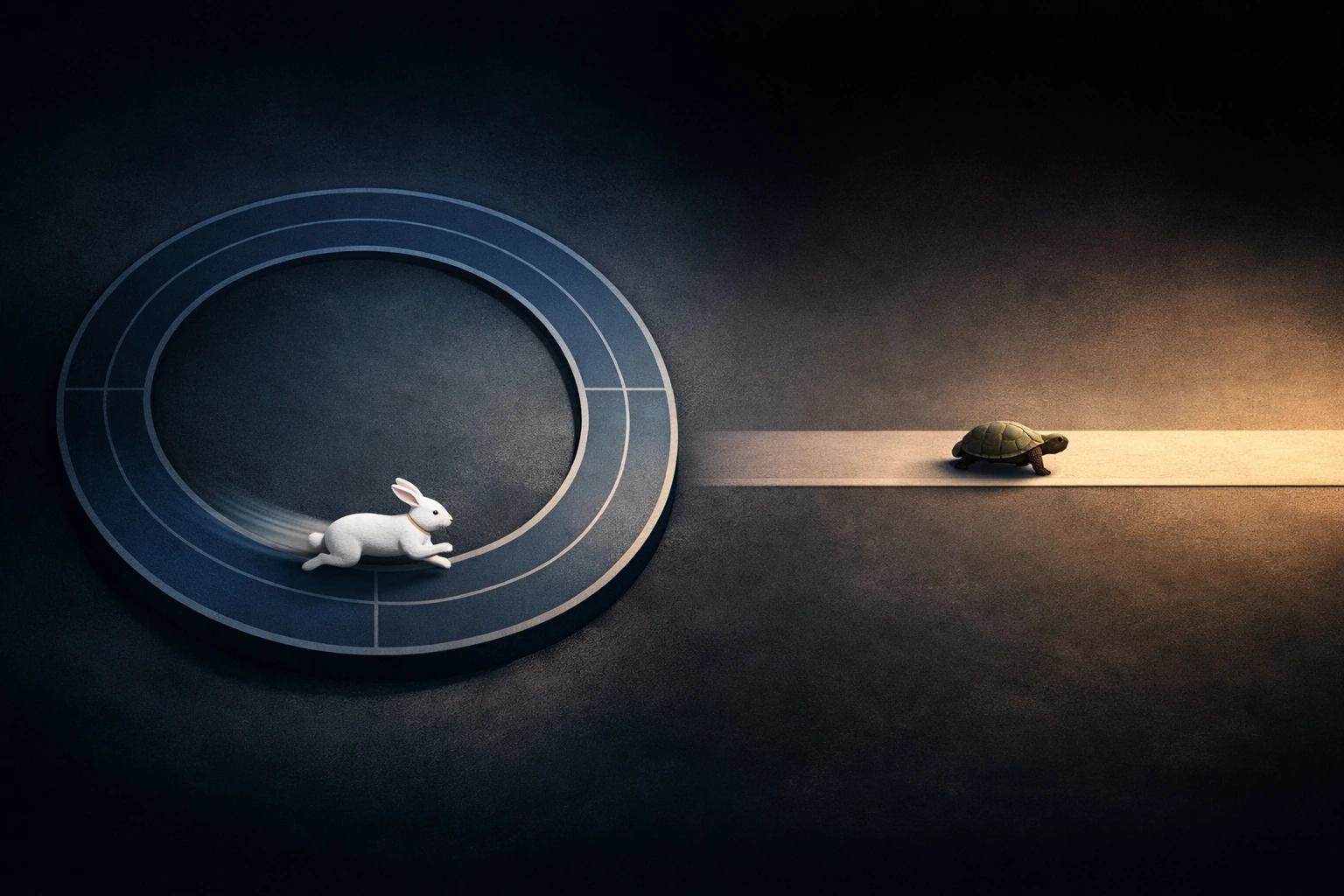

Engineering culture is built around incident response. Monitoring, alerting, on-call rotations, blameless postmortems — the entire operational apparatus is designed to detect and respond to acute failure. Something breaks, a signal fires, people converge, the system is restored.

This infrastructure exists because acute failure is legible. It has a timestamp, a blast radius, and a resolution state. It fits into a ticketing system. It can be assigned, tracked, and closed.

Gradual degradation has none of these properties. There is no moment at which a system transitions from healthy to rotting. There is no alert threshold for "this module now takes a senior engineer four hours to understand before making a one-line change." The tooling that engineering organizations invest in is structurally blind to the kind of decay that actually retires systems.

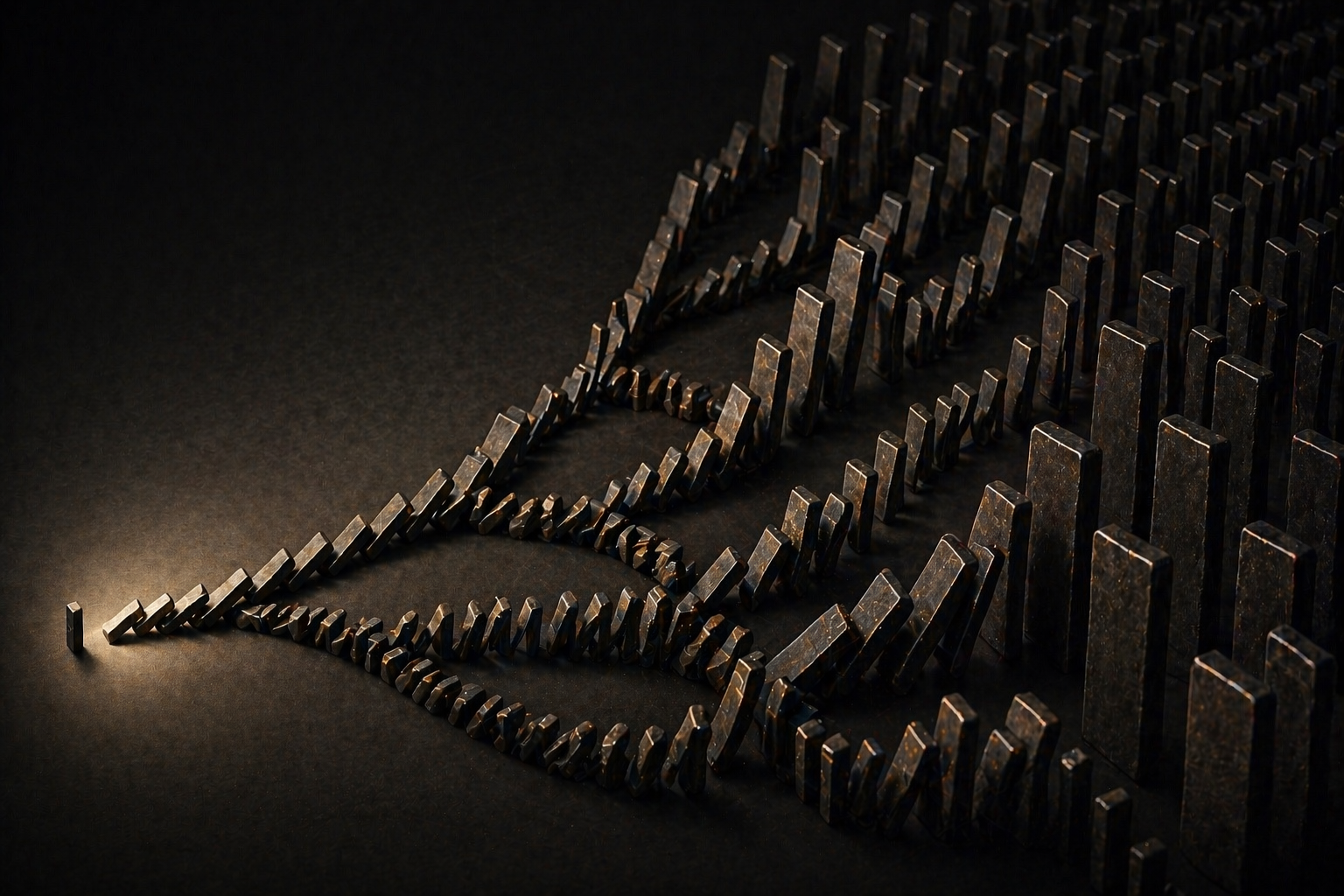

The Rot Comes From Correct Decisions Made in Sequence

The common assumption is that system degradation results from bad decisions — poor architecture, careless engineering, insufficient review. Sometimes it does. But the more dangerous form of rot comes from decisions that were individually defensible, even smart, that collectively produce a system no one can navigate.

This is the central contradiction: a sequence of correct local decisions can produce a globally incoherent system. Each choice made sense at the time, in the context available, under the constraints that existed. No single commit introduced the rot. No single quarter caused the decay. The degradation is an emergent property of many reasonable choices interacting over time without anyone tracking the cumulative shape.

This makes rot fundamentally different from failure. Failure has a cause. Rot has a history.

The Archaeology of a Request Path

A mid-stage B2B platform — roughly 60 engineers — ran its core product on a Node.js API layer that served both a React frontend and a growing set of third-party integrations. The system was healthy at launch. Two years later, it was technically functional and practically opaque.

The archaeology was legible in the request path. The original API had been designed around REST conventions with clean resource boundaries. Then a mobile client needed a subset of fields with lower payload sizes, so a middleware layer was added to transform responses based on a client-type header. Then a key enterprise customer required field-level access controls, so an authorization decorator was introduced that ran after routing but before the existing validation middleware. Then the integrations team needed webhook-style push notifications triggered by specific state transitions, so lifecycle hooks were grafted onto the controller layer.

Each of these additions shipped on time, passed review, and solved the problem it was designed to solve. None of them were mistakes. But after two years, a single API request traversed: routing, client-type detection, conditional field filtering, authorization decoration, validation, business logic, lifecycle hook evaluation, response transformation, and audit logging — with different subsets of this chain activating depending on the caller type, the resource being accessed, and the customer's entitlement tier.

No one on the team could hold the full request path in their head. New engineers were onboarded by being told which parts of the chain they could safely ignore for their current task. Bug investigations routinely took longer than fixes because tracing a behavior required understanding which combination of middleware was active for a given request context. The system was not broken. Every endpoint returned correct responses. But the cost of understanding had grown faster than the team's capacity to maintain shared context.
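
To make the tracing problem concrete, here is a minimal TypeScript sketch of a chain like the one described above. The stage names come from the request path listed earlier; everything else is an assumption made for illustration: the `RequestContext` shape, the `appliesTo` predicates, and the `orders` resource are hypothetical, not the platform's actual code. The sketch only shows how the set of active stages becomes a function of caller type, resource, and entitlement tier.

```typescript
// Hypothetical request context: the three axes that select the active chain.
type CallerType = "web" | "mobile" | "integration";
type Tier = "standard" | "enterprise";

interface RequestContext {
  caller: CallerType;
  resource: string;
  tier: Tier;
}

interface Stage {
  name: string;
  // Returns true when this stage runs for the given request context.
  appliesTo: (ctx: RequestContext) => boolean;
}

const always = (_ctx: RequestContext): boolean => true;

// Stage order follows the article; every predicate is an illustrative assumption.
const chain: Stage[] = [
  { name: "routing", appliesTo: always },
  { name: "client-type detection", appliesTo: always },
  { name: "conditional field filtering", appliesTo: (c) => c.caller === "mobile" },
  { name: "authorization decoration", appliesTo: (c) => c.tier === "enterprise" },
  { name: "validation", appliesTo: always },
  { name: "business logic", appliesTo: always },
  { name: "lifecycle hook evaluation", appliesTo: (c) => c.resource === "orders" },
  { name: "response transformation", appliesTo: (c) => c.caller !== "web" },
  { name: "audit logging", appliesTo: always },
];

// Lists, in order, the stages that would run for one request context.
function activeStages(ctx: RequestContext): string[] {
  return chain.filter((s) => s.appliesTo(ctx)).map((s) => s.name);
}

// Counts how many distinct stage combinations a set of contexts produces.
function distinctPaths(contexts: RequestContext[]): number {
  return new Set(contexts.map((c) => activeStages(c).join(" > "))).size;
}

// Example: trace the chain for a mobile caller on an enterprise tenant.
const traced = activeStages({ caller: "mobile", resource: "orders", tier: "enterprise" });
console.log(traced.join(" -> "));
```

With four conditional stages, even this toy version admits up to sixteen stage combinations. In the system described above, the equivalent conditions were scattered across middleware, decorators, and controller hooks rather than collected in one table, which is why answering "which stages ran for this request?" took longer than fixing the bug.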

What Rot Reveals About How Teams Model Their Systems

The gap that rot exposes is not between intention and execution — it is between the system's actual state and the team's mental model of it. Every engineer holds a simplified map of the system they work in. That map is built from the parts they touch regularly, the documentation that exists, and the conversations they overhear. As the system accretes complexity, each engineer's map drifts further from reality in different directions.

This is where rot becomes self-accelerating. When mental models diverge from the system's actual behavior, engineers make changes based on assumptions that are slightly wrong. Those changes introduce subtle inconsistencies. Those inconsistencies make the next engineer's assumptions slightly more wrong. The system is not degrading because people are careless. It is degrading because the complexity has outpaced the team's collective ability to reason about it accurately.

The uncomfortable insight is that confidence does not track accuracy. The engineer who has been on the team for 18 months feels fluent in the codebase. Their model is detailed and internally consistent. It is also increasingly fictional: not because they are wrong about what they know, but because the surface area of what they do not know has grown without any signal telling them so.

The Option That Closes Quietly

The terminal cost of rot is not slowness or frustration. It is the silent closure of the incremental repair path.

Every system has a window during which targeted refactoring can arrest decay. Extracting a module, introducing an interface boundary, consolidating duplicated logic — these interventions work when the surrounding code is still comprehensible enough to refactor safely. Rot narrows this window continuously. Each month of accumulated complexity makes targeted intervention harder to scope, harder to test, and harder to justify — because the system still works.
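
As one illustration of what "introducing an interface boundary" can look like while the code is still comprehensible, the TypeScript sketch below moves a client-type check behind a single interface. All names here (`ResponseShaper`, `subsetShaper`, `shaperFor`, the field names) are hypothetical and chosen for the example; the article does not describe how any real system implemented this.

```typescript
// Hypothetical names throughout; this is a sketch, not a described implementation.
type Fields = Record<string, unknown>;

// The boundary: callers depend on this interface, not on client-type checks.
interface ResponseShaper {
  shape(resource: Fields): Fields;
}

// Returns a copy with every field intact (for example, for a web frontend).
const fullShaper: ResponseShaper = {
  shape: (resource) => ({ ...resource }),
};

// Builds a shaper that keeps only an allow-listed subset of fields.
function subsetShaper(allowed: string[]): ResponseShaper {
  return {
    shape: (resource) => {
      const out: Fields = {};
      for (const key of allowed) {
        if (key in resource) out[key] = resource[key];
      }
      return out;
    },
  };
}

// The single place that maps a client type to a shaper.
function shaperFor(clientType: string): ResponseShaper {
  return clientType === "mobile" ? subsetShaper(["id", "status"]) : fullShaper;
}

const order = { id: 42, status: "shipped", internalNotes: "fragile" };
console.log(shaperFor("mobile").shape(order));
```

The design point is that the decision about which fields a client receives lives in one function, so later additions extend `shaperFor` instead of adding another conditional somewhere along the request path. This kind of extraction is cheap only while the existing conditionals are few enough to find and verify.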

By the time the rot becomes visible to leadership — typically when a strategic initiative stalls because the underlying system cannot accommodate it — the incremental path has often closed. The remaining options are a high-risk rewrite or an ongoing tax on every future feature. Both are expensive. Neither was inevitable. The window for the cheaper intervention simply passed without anyone noticing it was open.

Organizations that monitor uptime, latency, and error rates are watching for the system to break. The system that rots never breaks. It just gradually becomes something that can only be operated, never evolved.

Key Takeaways

- Most software systems are retired not by catastrophic failure but by gradual decay — a process that existing monitoring and incident-response infrastructure is structurally unable to detect.

- A sequence of individually correct engineering decisions can produce a globally incoherent system when no one tracks the cumulative effect of those decisions on system comprehensibility.

- System rot is self-accelerating: as complexity outpaces the team's mental model, each change introduces subtle inconsistencies that make future changes less predictable.

- The most consequential cost of rot is the silent closure of the incremental repair window — the period during which targeted refactoring could arrest decay at manageable cost.

- Systems that rot do not trigger alerts or postmortems, which means the organizational response is structurally delayed until the only remaining options are expensive and high-risk.